Nickel-Based Superalloys: Part Two

Abstract

Creep resistance depends on slowing the motion of dislocations within the crystal structure. In Ni-base superalloys the gamma prime phase, Ni3(Al,Ti), acts as a coherent barrier to dislocation motion and so strengthens the alloy by precipitation. Alloying additions such as aluminum and titanium promote the formation of the gamma prime phase.

Applications of superalloys include gas turbines, aero engine turbines, space vehicles, submarines, nuclear reactors, military electric motors, chemical processing vessels, and heat exchanger tubing.

The size of the gamma prime particles can be controlled closely by careful precipitation hardening heat treatments. Many superalloys are given a two-stage heat treatment, which creates a dispersion of cuboidal (square in cross-section) gamma prime particles, known as primary gamma prime, with a finer dispersion between them, known as secondary gamma prime.

Many other elements, both common and exotic, including metals, metalloids and nonmetals, can be present; chromium, cobalt, molybdenum, tungsten, tantalum, aluminum, titanium, zirconium, niobium, rhenium, carbon, boron and hafnium are just a few examples. Cobalt-base superalloys do not have a strengthening secondary phase like gamma prime.

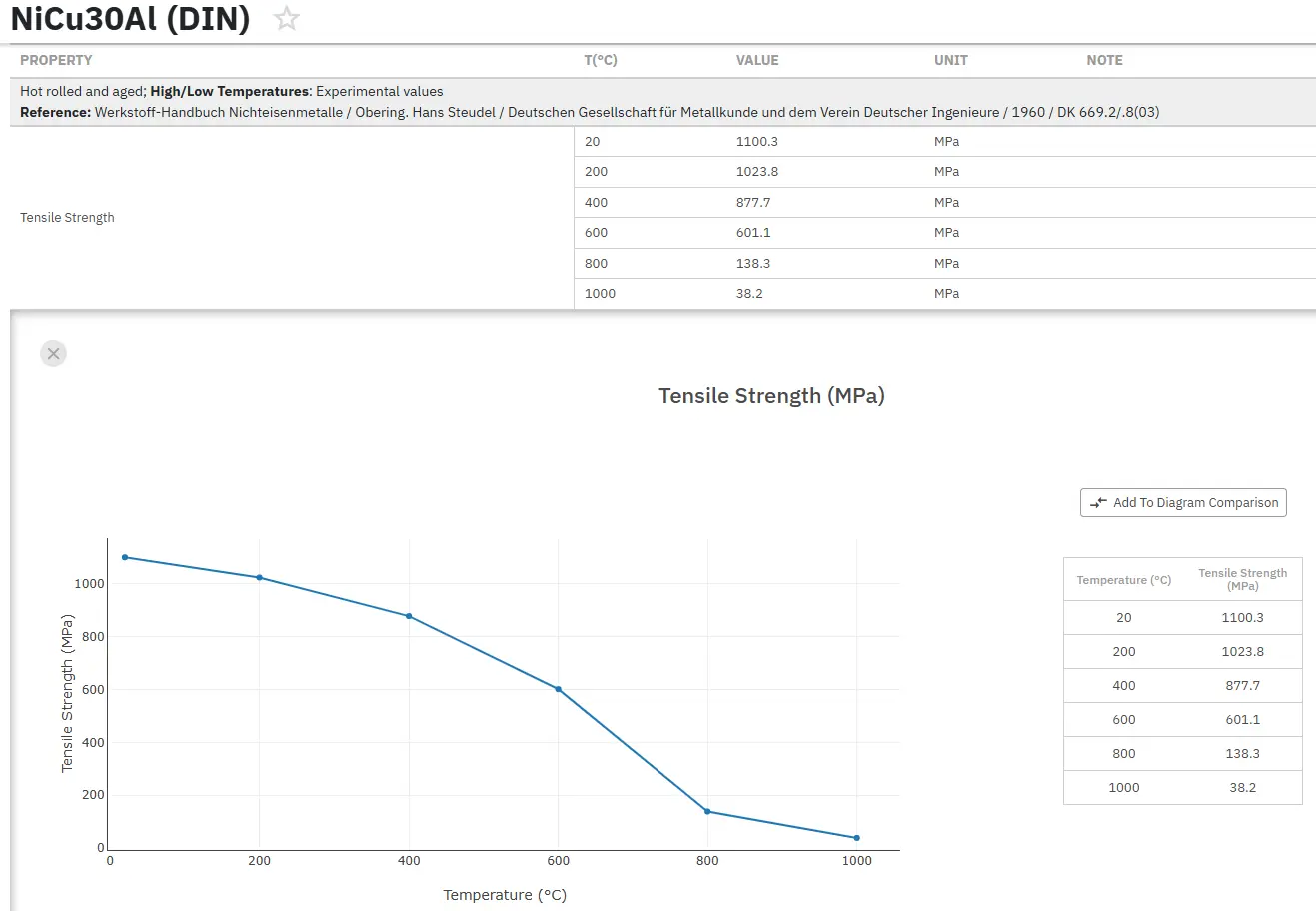

The strength of most metals decreases as the temperature is increased, simply because thermal activation makes it easier for dislocations to surmount obstacles. However, nickel-based superalloys containing γ', which is essentially an intermetallic compound based on the formula Ni3(Al,Ti), retain their strength particularly well as the temperature rises.
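The thermal-activation argument above can be illustrated with a minimal sketch. It assumes a simple Arrhenius (Boltzmann factor) description of a dislocation overcoming an obstacle; the barrier height of 1.0 eV and the function name `boltzmann_factor` are hypothetical values chosen for illustration and are not taken from any particular alloy.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def boltzmann_factor(barrier_ev, temperature_k):
    """Relative probability that thermal energy overcomes a barrier.

    Uses exp(-Q / kT); the barrier height Q is an illustrative assumption.
    """
    return math.exp(-barrier_ev / (BOLTZMANN_EV_PER_K * temperature_k))


HYPOTHETICAL_BARRIER_EV = 1.0  # assumed obstacle strength, illustration only

for temperature_k in (300.0, 600.0, 900.0):
    factor = boltzmann_factor(HYPOTHETICAL_BARRIER_EV, temperature_k)
    print(f"T = {temperature_k:6.1f} K  exp(-Q/kT) = {factor:.3e}")
```

The factor rises steeply with temperature, which is why obstacles that pin dislocations at room temperature become progressively easier to bypass when the metal is hot.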

Ordinary slip in both γ and γ' occurs on the {111}<110> slip systems. If slip were confined to these planes at all temperatures, the strength would decrease as the temperature is raised. However, there is a tendency for dislocations in γ' to cross-slip onto the {100} planes, where they have a lower anti-phase domain boundary energy. This tendency arises because that energy decreases with temperature.
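The slip geometry described above can be checked with a short enumeration. The sketch below is purely geometric: it lists the members of a cubic family whose Miller indices are 0 or ±1, and pairs each plane normal with the directions lying in that plane (zero dot product). The helper names `cubic_family`, `slip_systems` and `planes_containing` are hypothetical, introduced only for this illustration.

```python
from itertools import product


def cubic_family(indices):
    """Distinct members of a cubic family such as {111} or <110>.

    v and -v are counted once. Only valid for indices made of 0 and 1.
    """
    target = sorted(abs(i) for i in indices)
    members = []
    for v in product((-1, 0, 1), repeat=3):
        if sorted(abs(c) for c in v) != target:
            continue
        # Keep the representative whose first nonzero component is positive.
        if next(c for c in v if c != 0) > 0:
            members.append(v)
    return members


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def slip_systems(plane_family, direction_family):
    """Pairs (plane normal, direction) with the direction lying in the plane."""
    return [(n, d)
            for n in cubic_family(plane_family)
            for d in cubic_family(direction_family)
            if dot(n, d) == 0]


def planes_containing(direction, plane_family):
    """Planes of a family that contain the given direction."""
    return [n for n in cubic_family(plane_family) if dot(n, direction) == 0]


octahedral = slip_systems((1, 1, 1), (1, 1, 0))  # {111}<110>
cube = slip_systems((1, 0, 0), (1, 1, 0))        # {100}<110>
print(len(octahedral), "octahedral systems,", len(cube), "cube systems")

# A screw dislocation with Burgers vector along [1 -1 0] can glide on any
# plane that contains that direction.
b = (1, -1, 0)
print("octahedral planes:", planes_containing(b, (1, 1, 1)))
print("cube planes:", planes_containing(b, (1, 0, 0)))
```

Each <110> direction is shared by two {111} planes and one {100} plane, which is the geometric reason a screw segment gliding on a close-packed plane has a cube plane available for cross-slip.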

Situations arise where the extended dislocation lies partly on the close-packed plane and partly on the cube plane. Such a dislocation becomes locked, leading to an increase in strength. The strength only decreases beyond about 600°C, at which point thermal activation is sufficiently vigorous to allow the dislocations to overcome the obstacles. To summarize, it is the presence of γ' that makes the strength of nickel-based superalloys relatively insensitive to temperature.

When greater strength is required at lower temperatures (e.g. turbine discs), alloys can be strengthened using another phase known as γ''. This phase occurs in nickel superalloys with significant additions of niobium (Inconel 718) or vanadium; the composition of the γ'' is then Ni3Nb or Ni3V. The particles of γ'' are in the form of discs with (001)γ'' || {001}γ and [100]γ'' || <100>γ.

Commercial superalloys contain more than just Ni, Al and Ti. Chromium and aluminum are essential for oxidation resistance, and small quantities of yttrium help the oxide scale to cohere to the substrate. Polycrystalline superalloys contain grain boundary strengthening elements such as boron and zirconium, which segregate to the boundaries. The resulting reduction in grain boundary energy is associated with better creep strength and ductility when the mechanism of failure involves grain decohesion.

There are also the carbide formers (C, Cr, Mo, W, Nb, Ta, Ti and Hf). The carbides tend to precipitate at grain boundaries and hence reduce the tendency for grain boundary sliding.

Elements such as cobalt, iron, chromium, niobium, tantalum, molybdenum, tungsten, vanadium, titanium and aluminum are also solid-solution strengtheners, both in γ and γ'. There are, naturally, limits to the concentrations that can be added without inducing precipitation. It is particularly important to avoid certain embrittling phases such as Laves and sigma. There are no simple rules governing the critical concentrations; it is best to calculate or measure the appropriate part of a phase diagram.

As mentioned earlier, superalloys are commonly used in the regions of gas turbine engines that are subject to high temperatures and that require high strength, excellent creep resistance, and corrosion and oxidation resistance. In most turbine engines this is the high-pressure turbine, where blades can face temperatures approaching, if not exceeding, their melting temperature. Thermal barrier coatings (TBCs) play an important role in allowing blades to operate under such conditions, protecting the base material from thermal effects as well as from corrosion and oxidation.

A major use of nickel-based superalloys is in the manufacture of aero engine turbine blades. A single-crystal blade is free from γ/γ grain boundaries. Boundaries are easy diffusion paths and therefore reduce the resistance of the material to creep deformation. The directionally solidified columnar grain structure has many γ grains, but the boundaries are mostly parallel to the major stress axis; the performance of such blades is not as good as that of single-crystal blades. However, they are much better than blades with an equiaxed grain structure, which have the worst creep life.

Additional applications of superalloys include the following: gas turbines (commercial and military aircraft, power generation, and marine propulsion); space vehicles; submarines; nuclear reactors; military electric motors; chemical processing vessels; and heat exchanger tubing.

Nickel-based superalloy blades are generally made using an investment casting process. A wax model is made, around which a ceramic is poured to make the mould. The wax is removed from the solid ceramic and molten metal is poured in to fill the mould. The actual process can be more complicated, depending on how intricate the shapes to be produced are.